Our team builds projects that explore what is possible in XR. From software development to full haptics systems that bridge the gap between the digital and the physical through touch and sensation, our work spans the full spectrum of extended reality. We believe that the most groundbreaking experiences emerge at the intersection of disciplines, which is why our team brings together diverse expertise to explore, experiment, and redefine what XR can be.

An arm that restricts movement in certain VR scenarios. The main idea is that the arm reacts to the virtual environment: if you touch a wall, for example, it can restrict your arm to provide realistic feedback.
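The wall example can be sketched in a few lines. This is only an illustration under stated assumptions, not the project's control code: the `Wall` type, the `brake_level` function, and the 2 cm `ramp` distance are all hypothetical, and the wall is modeled as an infinite plane.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Wall:
    """Hypothetical infinite wall: all points p with dot(normal, p) == offset."""
    normal: tuple[float, float, float]  # unit vector pointing into free space
    offset: float


def signed_distance(wall: Wall, hand: tuple[float, float, float]) -> float:
    """Distance from the hand to the wall in metres; negative means inside the wall."""
    return sum(n * h for n, h in zip(wall.normal, hand)) - wall.offset


def brake_level(wall: Wall, hand: tuple[float, float, float], ramp: float = 0.02) -> float:
    """Return how strongly to restrict the arm: 0.0 is free, 1.0 is fully locked.

    Resistance ramps up linearly over the last `ramp` metres before contact
    (an assumed value) so the restriction does not engage abruptly.
    """
    distance = signed_distance(wall, hand)
    if distance >= ramp:
        return 0.0
    if distance <= 0.0:
        return 1.0
    return 1.0 - distance / ramp


# A wall at x = 1.0 m whose free side faces the user (towards negative x).
wall = Wall(normal=(-1.0, 0.0, 0.0), offset=-1.0)
free = brake_level(wall, (0.5, 0.0, 0.0))      # hand far from the wall
near = brake_level(wall, (0.99, 0.0, 0.0))     # hand 1 cm from the wall
touching = brake_level(wall, (1.1, 0.0, 0.0))  # hand past the wall surface
```

A real device would also need to account for the arm's joint geometry and actuator limits, which this sketch leaves out.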

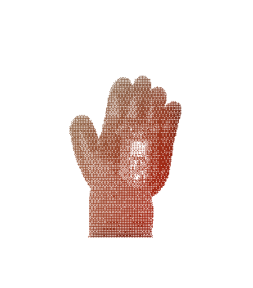

Part of the larger haptics project. These wearable gloves will track the pose of each finger and provide force feedback when touching objects. This will let users feel the action of picking things up and the firmness of each object. Finger tracking is handled by Hall-effect sensors, and force feedback is controlled via low-power servos. A microcontroller communicates with a PC driver to control the gloves.
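As a rough sketch of how sensor readings and force feedback might fit together on the driver side: the function names, the linear calibration, and the firmness model below are assumptions made for illustration, not the glove's actual firmware or driver protocol. Real Hall-effect readings are generally not linear in finger angle, so a production calibration would likely be more involved.

```python
def curl_from_hall(raw: int, raw_open: int, raw_closed: int) -> float:
    """Map a raw Hall-effect sensor reading to a finger curl in [0.0, 1.0].

    raw_open and raw_closed are per-finger calibration readings taken with
    the finger fully extended and fully curled (assumed linear in between).
    """
    span = raw_closed - raw_open
    if span == 0:
        raise ValueError("calibration readings must differ")
    curl = (raw - raw_open) / span
    return min(1.0, max(0.0, curl))


def servo_stop_curl(contact_curl: float, firmness: float) -> float:
    """Return the curl at which the servo should stop the finger.

    contact_curl is the curl at which the finger first touches the virtual
    object. A firmness of 1.0 stops the finger at contact; a firmness of 0.0
    lets it close completely, as if the object offered no resistance.
    """
    if not 0.0 <= firmness <= 1.0:
        raise ValueError("firmness must be between 0.0 and 1.0")
    return contact_curl + (1.0 - firmness) * (1.0 - contact_curl)


half_curled = curl_from_hall(512, raw_open=200, raw_closed=824)
rigid_stop = servo_stop_curl(contact_curl=0.4, firmness=1.0)
soft_stop = servo_stop_curl(contact_curl=0.4, firmness=0.5)
```

Here a rigid object halts the finger exactly at contact, while a softer one lets it sink further before the servo resists.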

This project is a wearable device mounted beneath a VR headset that delivers scents directly to the user's nose. It uses electronically controlled fans and atomizers (which convert liquid into vapor) to synchronize scents with the virtual reality environment in real time.

An all-in-one device that mimics natural movement while keeping its user in place.

This project explores alternative VR input methods. We are currently working to create a more generalizable brain-computer interface (BCI) controller for VR applications.

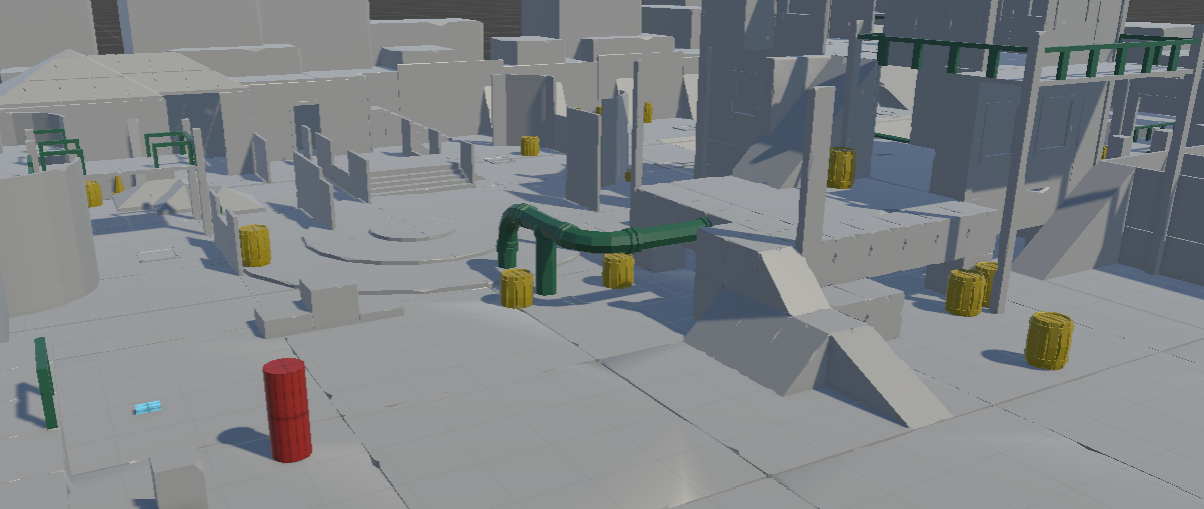

An experimental stealth-horror game made in Unity that tests different novel mechanics. We are currently creating a demo level that will be complete and available for public playtesting by the end of the semester.

A Rokid smart-glasses and Android companion app that helps you find lost items by recalling when they were last seen and describing the surroundings. We use a persistent memory system to recall where objects are, and we track hand movements with a YOLO model to see where objects are placed.
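A minimal sketch of what a persistent last-seen memory could look like, assuming a small SQLite table keyed by object label. The `LastSeenMemory` class, its schema, and the text descriptions are hypothetical; the detection step (the YOLO model) is out of scope here and is represented only by the labels passed to `record`.

```python
from __future__ import annotations

import sqlite3
import time


class LastSeenMemory:
    """Hypothetical store that keeps the most recently recorded sighting per object."""

    def __init__(self, path: str = ":memory:") -> None:
        # Pass a file path instead of ":memory:" to persist across restarts.
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS sightings ("
            "label TEXT PRIMARY KEY, seen_at REAL, surroundings TEXT)"
        )

    def record(self, label: str, surroundings: str, seen_at: float | None = None) -> None:
        """Store a sighting, replacing any earlier entry for the same label."""
        timestamp = time.time() if seen_at is None else seen_at
        self.db.execute(
            "INSERT OR REPLACE INTO sightings (label, seen_at, surroundings) "
            "VALUES (?, ?, ?)",
            (label, timestamp, surroundings),
        )
        self.db.commit()

    def recall(self, label: str) -> tuple[float, str] | None:
        """Return (seen_at, surroundings) for the label, or None if never seen."""
        row = self.db.execute(
            "SELECT seen_at, surroundings FROM sightings WHERE label = ?",
            (label,),
        ).fetchone()
        return None if row is None else (row[0], row[1])


memory = LastSeenMemory()
memory.record("keys", "on the desk next to the laptop", seen_at=100.0)
memory.record("keys", "on the kitchen counter", seen_at=200.0)
last_keys = memory.recall("keys")
last_wallet = memory.recall("wallet")
```

Keeping one row per label makes recall a single lookup; storing a full sighting history instead would allow questions like "where were my keys yesterday?" at the cost of more storage.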